Brief Description

Deepfake generation technologies are rapidly evolving and are increasingly used to manipulate public opinion, attack individuals, and amplify large-scale misinformation. In the next few years, generators will make synthetic faces almost indistinguishable from real ones, especially after they are shared on social media platforms that apply compression, resizing, re-encoding, and filtering. These widespread transformations expose a gap between lab evaluations and real-world use, underscoring the urgent need for deepfake detectors that remain reliable under both adversarial attacks and realistic post-processing.

Our AADD Challenge 2025 at ACM MM has shown that state-of-the-art deepfake detectors are still vulnerable to carefully crafted adversarial perturbations under controlled conditions. The AADD Challenge 2026 extends this line of work by explicitly modeling JPEG/JPEG-AI compression and social-media-like processing, bringing the evaluation significantly closer to real deployment scenarios. This is directly relevant to the multimedia research community, to industry (social media, streaming, content platforms), and to society, because it targets the robustness of the forensic tools that will be critical for protecting information integrity and digital trust over the coming years.

Registration Process

To register for the challenge, please send an email to the main contact, Luca Guarnera (challenge.dff@gmail.com), providing the following information:

- Name of Team

- List of teammates (Name, Surname, Nationality, Organization)

- Team email

- Team referent (a teammate)

- Organization/Institution

Chairs

- Luca Guarnera, Research Fellow, luca.guarnera@unict.it, University of Catania, Italy

- Francesco Guarnera, Research Fellow, francesco.guarnera@unict.it, University of Catania, Italy

Co-Chairs

- Sebastiano Battiato, Full Professor, sebastiano.battiato@unict.it, University of Catania, Italy

- Andrea Montibeller, Research Fellow, andrea.montibeller@unitn.it, University of Trento, Italy

- Giulia Boato, Full Professor, giulia.boato@unitn.it, University of Trento, Italy

- Giovanni Puglisi, Associate Professor, puglisi@unica.it, University of Cagliari, Italy

- Zahid Akhtar, Associate Professor, akhtarz@sunypoly.edu, State University of New York Polytechnic Institute, USA

Technical Committee

- Ludovica Beritelli, PhD Student, ludovica.beritelli@phd.unict.it, University of Catania, Italy

- Dario Pishvai, PhD Student, dario.pishvai@phd.unict.it, University of Catania, Italy

- Salvo Sambataro, PhD Student, salvatore.sambataro@phd.unict.it, University of Catania, Italy

Main Contact

- Name : Luca Guarnera

- Email : challenge.dff@gmail.com

- Address : Dipartimento di Matematica e Informatica Cittadella Universitaria - Viale A. Doria 6 – Italy.

Important dates

The test set has been released and consists of 1,600 deepfake images. Participants are required to apply adversarial attacks to these images with the goal of inducing misclassification, making them appear as real to the provided classifiers.

List of registered teams (so far)

| # | Team Name | Organization/Institution | Country |
|---|---|---|---|
| 1 | Università degli Studi di Napoli Federico II | Università degli Studi di Napoli Federico II | Italy |
| 2 | Mizhi LAb | University of Southampton China National University of Defense Technology | China |
| 3 | Quantum Dandelion | University of Science and Technology | China |
| 4 | Team KK | Independent Research Group | United States, Canada |
| 5 | Harmony Forge | Beijing Union University | China |
| 6 | GhostChamber | IISER Bhopal | India |
| 7 | FakeBusters@UNISA | Università degli Studi di Salerno | Italy |
| 8 | MMC Lab | Hallym University | South Korea |
| 9 | MSU Team | MSU Institute for Artificial Intelligence | Russia |
| 10 | BalzanaBits | University of Siena | Italy |
| 11 | CASALab | MSU Università degli Studi di Salerno | Italy |
| 12 | WHU_PB | Wuhan University | China |
| 13 | StaySword | Wuhan Textile University | China |
| 14 | AdverSiena | University of Siena | Italy |
| 15 | ELO | ELO Lab, University of Information Technology (UIT) | Vietnam |
| 16 | Fraunhofer SIT | Fraunhofer SIT, Centro ATHENE | Germany |
| 17 | WayForWind | Xihua University | China |
| 18 | Safe AI | Ulsan National Institute of Science and Technology (UNIST) | South Korea |
| 19 | SN Group | School of Computer and Software Engineering, Xihua University | China |

Along with the test set, two out of the eight trained classifiers have been made available. It is important to note that some of the classifiers—including one of the two released—have been trained on the frequency components of the images.

An evaluation script is also provided. This script is intended to help participants understand the required structure and format for submitting the attacked images.

Finally, each team is allowed to submit only one attacked test set for evaluation. In the case of multiple submissions, only the last submission made before the deadline will be considered for the final evaluation.

By this date, all participants are required to submit the following:

- Attacked Test Set: A version of the provided test set that reflects the participant’s attack strategy, following the challenge guidelines.

- Abstract Paper: A short abstract paper (1–2 pages) that briefly describes the methodology, motivation, and key contributions of the proposed approach.

Submissions must be sent by email to the challenge contact, Luca Guarnera (challenge.dff@gmail.com).

The attacked test set of each team will undergo randomly selected JPEG/JPEG-AI compression and social-media-like processing before being evaluated by the six trained classifiers.

The top 3 teams will be invited to submit a full-length paper describing their method in detail. This paper will undergo a review process managed by the challenge organizers.

The organizers will notify the top 3 teams about the outcome of the review process.

Based on the reviews, zero, one, more, or all of the submitted papers may be accepted for inclusion in the ACM Multimedia 2025 proceedings.

Evaluation criteria

- Requirement & Submission

Each submission must include original deepfake images and their perturbed versions. Only complete image pairs will be evaluated. - Accuracy Calculation

An attacked image is a positive case if a detection system misclassifies it as "real." Accuracy is the proportion of these cases within the dataset. - Final Score Composition

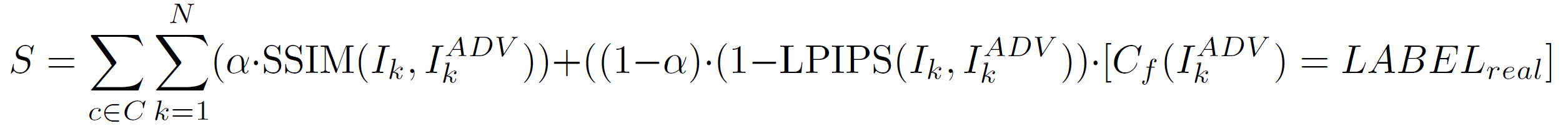

The score is a weighted average of SSIM, LPIPS and detection accuracy (across all classifiers). The final score is calculates as follow:

where:- C is the set of all classifiers

- N is the number of deepfake images in the test dataset

- Ik is the k-th image from the deepfake test dataset

- IkADV is the adversarial image generated from Ik

- LABELreal is the label of class real

- [] is the indicator function which equals to 1 when predicate is true, otherwise equals to 0

- α is equal to 0.5
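To make the ingredients of the score concrete, the following Python sketch shows one way they could be combined. The official formula is given only in the figure above, so the combination used here (each fooled classifier contributes `alpha * SSIM + (1 - alpha) * (1 - LPIPS)` for that image) is an assumption, not the organizers' definition. The names `AttackedImage`, `attack_success_rate`, `assumed_score`, and the label encoding `LABEL_REAL = 0` are hypothetical, and the SSIM and LPIPS values are assumed to be precomputed with a library of the participant's choice.

```python
from dataclasses import dataclass
from typing import Sequence

ALPHA = 0.5      # weight alpha, as stated in the challenge description
LABEL_REAL = 0   # hypothetical encoding of the "real" class


@dataclass
class AttackedImage:
    ssim: float                 # SSIM between I_k and I_k^ADV (higher = more similar)
    lpips: float                # LPIPS between I_k and I_k^ADV (lower = more similar)
    predictions: Sequence[int]  # predicted label from each classifier in C


def attack_success_rate(images: Sequence[AttackedImage], classifier_idx: int) -> float:
    """Fraction of attacked images that one classifier labels as real."""
    hits = sum(1 for im in images if im.predictions[classifier_idx] == LABEL_REAL)
    return hits / len(images)


def assumed_score(images: Sequence[AttackedImage], alpha: float = ALPHA) -> float:
    """ASSUMED combination: sum over classifiers and images of
    [prediction == real] * (alpha * SSIM + (1 - alpha) * (1 - LPIPS)).
    Check the official formula before relying on this."""
    total = 0.0
    for im in images:
        quality = alpha * im.ssim + (1.0 - alpha) * (1.0 - im.lpips)
        fooled = sum(1 for p in im.predictions if p == LABEL_REAL)
        total += fooled * quality
    return total


if __name__ == "__main__":
    demo = [
        AttackedImage(ssim=1.0, lpips=0.0, predictions=[0, 0]),
        AttackedImage(ssim=0.8, lpips=0.2, predictions=[0, 1]),
        AttackedImage(ssim=0.5, lpips=0.5, predictions=[1, 1]),
    ]
    print(attack_success_rate(demo, 0), assumed_score(demo))
```

Under this assumed form, an attack is rewarded only when it both fools a classifier and stays visually close to the original image, which matches the stated intent of weighting detection accuracy against SSIM and LPIPS.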

List of AADD Challenge editions

- 1st Adversarial Attacks on Deepfake Detectors: A Challenge in the Era of AI-Generated Media - Grand Challenge at ACM Multimedia 2025 - Paper link

@inproceedings{battiato2025adversarial,

title={Adversarial Attacks on Deepfake Detectors: A Challenge in the Era of AI-Generated Media (AADD-2025)},

author={Battiato, Sebastiano and Casu, Mirko and Guarnera, Francesco and Guarnera, Luca and Puglisi, Giovanni and Pontorno, Orazio and Ragaglia, Claudio Vittorio and Akhtar, Zahid},

booktitle={Proceedings of the 33rd ACM International Conference on Multimedia},

pages={13714--13719},

year={2025}

}